Mini Project 2: Build your own AI Chatbot!

In this homework, you will:

- Build three versions of a basic AI chatbot, of increasing complexity.

- Save users' conversation histories with the chatbot to persistent storage, and cache frequently used ("hot") conversations.

Group Work

Please use the same groups you used for project 1. As before, the instructions will refer to student 1 and student 2. Please decide which of you will be which before you start.

Pre-requisites

This project will reuse concepts we have seen before: structs, HashMaps, and Option.

In addition, you will encounter Result. You will also encounter async/await. However, you won't have to use either of these concepts deeply. The instructions will give you some hints on how to use them, but feel free to skim the linked documentation when needed.

Part 1 - Basic Chatbot

You can find the provided stencil code for our basic chatbot here. You will need to perform the following steps:

Step 0 - Installation

Updating your GitHub fork: Whichever of the two students owned the fork of our repository in project 1 should update the main branch of their fork to match our course repository (the repository you forked your repo from). After doing this, you must be able to see project_2_chatbot/basic_chatbot in the GitHub web UI for your repository.

Installation: Each student should do these steps independently on their computer.

Go to your clone of the repository, switch to the main branch, and pull the changes. Confirm that you can indeed see project_2_chatbot.

Navigate to your repository’s folder using a terminal, and then execute the following commands:

```shell
cd project_2_chatbot
cd basic_chatbot
cargo run --release --features v1
```

This will:

- Build/compile the stencil code. This may take 2-5 minutes on your computer.

- Run v1 of the chatbot for the very first time on your computer.

- Because it is the first run, the stencil code will download a Llama LLM to your computer. This may take 2-10 minutes on your computer, depending on your network connection. You will see a progress bar in your terminal showing you the progress of this download.

All in all, this may take anywhere from a few minutes to a quarter of an hour on your computer. Please do this early to avoid any surprises.

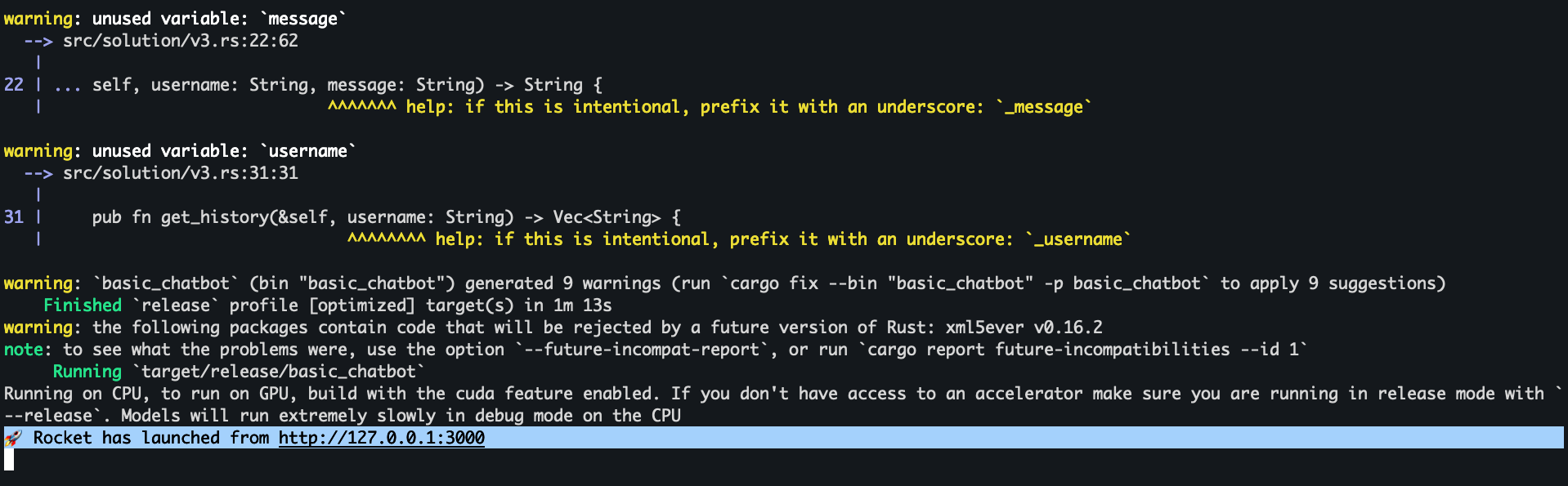

After all the steps are complete, you should see "Rocket has launched from http://127.0.0.1:3000" printed in your terminal, as shown in the screenshot below.

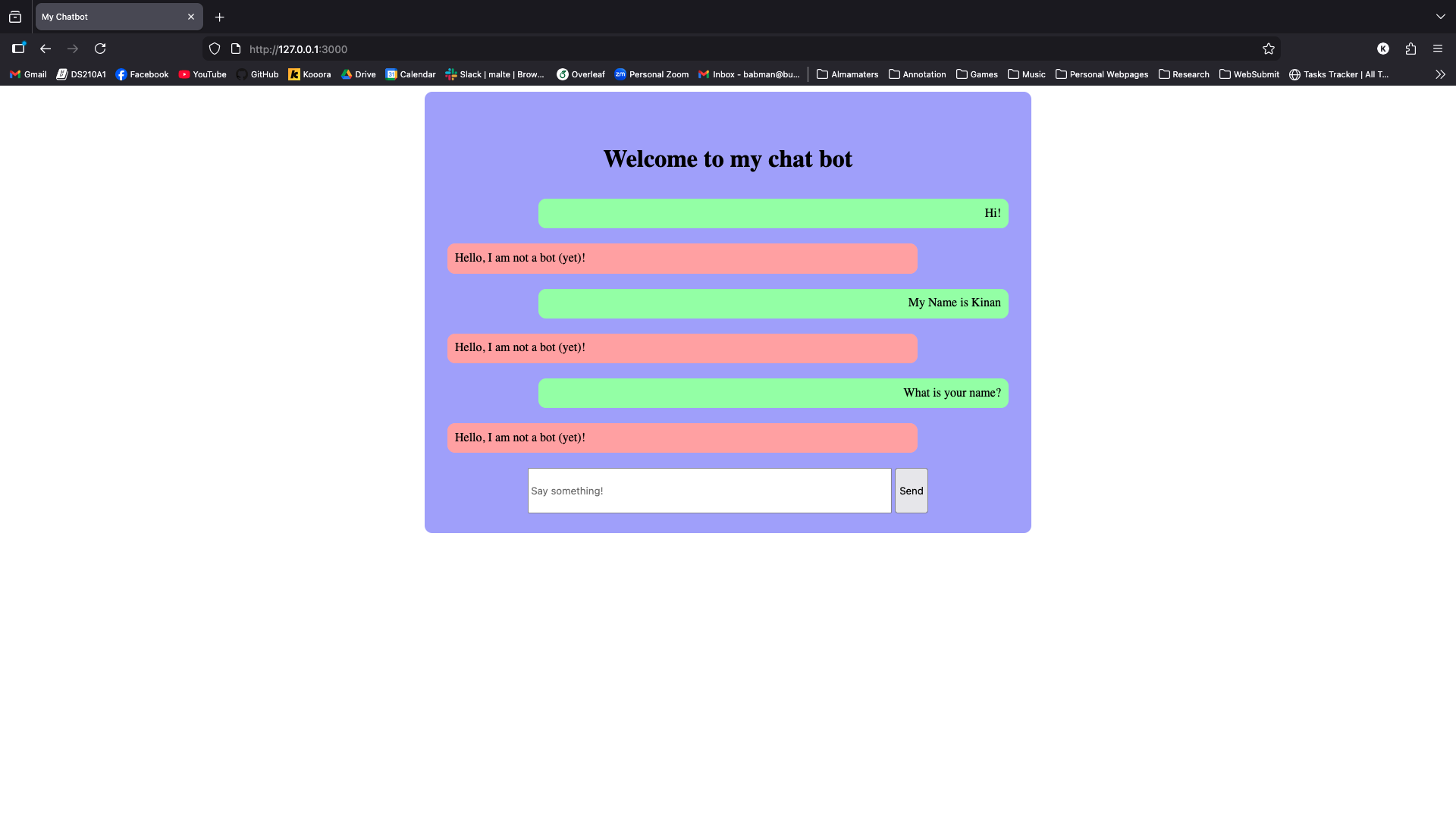

Open your favorite web browser (preferably Firefox or Chrome, but certainly not Internet Explorer or Edge, because we are not savages) and navigate to http://127.0.0.1:3000. This will show you our chatbot web interface (shown below!).

First, log in using any username of your choosing, then send some messages to the chatbot! As you can see in the screenshot above, the chatbot is not currently functional. Your task is to make it an actual chatbot!

V1

Each student should implement this part independently. Student 1 and Student 2 should each branch off main to a new branch, s1basic and s2basic, respectively. Each will work on their own branch for now.

Open the basic_chatbot folder in VSCode (File > Open Folder), then navigate to src/solution/v1.rs.

You will see our provided stencil for v1, which includes:

- A ChatbotV1 struct: this represents version 1 of your chatbot. It stores the Llama LLM model inside it as a field called model.

- A new function that is a member of ChatbotV1: this function is implemented for you, and the stencil executes it once, when the website is launched for the very first time, to construct your chatbot.

- A chat_with_user function that is a member of ChatbotV1: you will have to implement this function.

- Several #[allow(dead_code)] attributes: these tell the Rust compiler not to show you irrelevant warnings if you choose to use a different version of the chatbot later on. You can ignore them.

The provided stencil code uses an external library called kalosm (see line 1). This is a library for managing and using LLMs from Rust. Cargo already downloaded and built this library for you automatically during Step 0.

Let’s look a little more carefully at chat_with_user:

async: this function signature (line 15) uses pub async fn instead of the pub fn we are familiar with. This means that the function is asynchronous. Essentially, this tells Rust that the function may take a long time, so while it is waiting (for example, for the LLM to produce a response), Rust can run other work alongside it.

We have to use async because kalosm is an asynchronous library. The developers of kalosm made this choice because invoking an LLM takes a lot of work and time, especially on regular computers running the model on CPUs.

Thus, the kalosm developers wanted to allow applications to perform other tasks in the meantime, in order to save time.
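The sketch below shows what async and .await mean in plain Rust, independent of kalosm: calling an async fn does not run its body, it only returns a future, and the body runs when that future is awaited (or polled by a runtime). In the real stencil, Rocket's async runtime drives these futures for you; here a tiny hand-written block_on (a hypothetical helper, not part of the stencil or kalosm) plays that role so the example runs on its own. The pirate_reply function is also made up for illustration.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

// Wakes the blocked thread when the future signals it can make progress.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

// Hypothetical minimal executor: polls the future until it is ready,
// parking the current thread whenever the future is still pending.
fn block_on<F: Future>(future: F) -> F::Output {
    let mut future = pin!(future);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match future.as_mut().poll(&mut cx) {
            Poll::Ready(output) => return output,
            Poll::Pending => thread::park(),
        }
    }
}

// Stand-in for a slow operation such as asking an LLM for a reply.
async fn pirate_reply(message: String) -> String {
    format!("Arr, ye said: {message}")
}

// An async fn can .await other futures; its own caller gets a future back.
async fn chat(message: String) -> String {
    let pending = pirate_reply(message); // a future: nothing has run yet
    pending.await // run it to completion and take its output (no parentheses!)
}

fn main() {
    let reply = block_on(chat("hello".to_string()));
    println!("{reply}"); // prints "Arr, ye said: hello"
}
```

Inside chat_with_user you are already in an async fn, so you only need the .await part; the runtime plays the role of block_on.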

message: String: the chat_with_user function receives two arguments. The first is self, representing the instance of ChatbotV1 this function is called over (we have seen this before with project 1 and structs). The second is message, which contains the message the user wants to send to the chatbot and LLM.

You can confirm this by adding println!("{message}");, re-running the chatbot using cargo run --release --features v1, and then sending some messages and looking at the printed output in the terminal.

chat_session: This variable contains a Chat object. This is the interface/type that the kalosm library provides to manage and use chat sessions with the LLM. The exact type of this variable is kalosm::language::Chat<Llama>, which indicates that it is a chat session with a Llama LLM, but you can skip the kalosm::language:: part and write Chat<Llama> instead, because of the use statement on line 1.

with_system_prompt: lines 16-18 show you how the chat_session variable is initialized. We create a new chat session from the Llama model we previously stored inside self (when the chatbot was first created using new). We then configure it to use the system prompt "The assistant will act like a pirate" (because I like pirates).

Your task is to complete the implementation of chat_with_user by passing the user-provided message to the chat session, and then retrieving and returning the LLM response.

We suggest you look at the add_message function provided by the kalosm library. Look at the given example for how it can be used.

The add_message function returns the response asynchronously. You can instruct Rust to wait until the entire response is ready by invoking .await on what it returns. For example:

```rust
let asynchronous_output = chat_session.add_message(...);
let output = asynchronous_output.await; // notice the lack of (): await is not a function; it is a special keyword!
```

Look at the type of output. How can you extract the response message string from it? Hint: it is similar to (but not exactly the same as) Option and can be dealt with using the same approaches we have seen before for Option.
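Result<T, E> holds either a success value (Ok) or an error (Err), much as Option holds Some or None; unlike None, an Err carries a value explaining what went wrong. The sketch below uses a made-up fake_llm_reply function returning Result<String, String> to show three familiar ways of getting the string out. The actual error type produced by kalosm's add_message will differ, so check its documentation.

```rust
// Hypothetical stand-in for an operation that can fail.
fn fake_llm_reply(message: &str) -> Result<String, String> {
    if message.is_empty() {
        Err("empty message".to_string())
    } else {
        Ok(format!("Reply to: {message}"))
    }
}

// 1. match: handle both cases explicitly, just like matching Some/None.
fn reply_with_match(message: &str) -> String {
    match fake_llm_reply(message) {
        Ok(text) => text,
        Err(e) => format!("Sorry, something went wrong: {e}"),
    }
}

// 2. unwrap_or_else: supply a fallback value computed from the error.
fn reply_with_fallback(message: &str) -> String {
    fake_llm_reply(message).unwrap_or_else(|e| format!("error: {e}"))
}

// 3. unwrap: take the value, panicking on Err (fine for quick experiments).
fn reply_or_panic(message: &str) -> String {
    fake_llm_reply(message).unwrap()
}

fn main() {
    println!("{}", reply_with_match("ahoy")); // Reply to: ahoy
    println!("{}", reply_with_fallback("")); // error: empty message
    println!("{}", reply_or_panic("ahoy")); // Reply to: ahoy
}
```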

Testing your chatbot: use cargo run --release --features v1 to test your chatbot. Send different messages and check that your chatbot behaves as expected. Feel free to change the system prompt so the chatbot is something other than a pirate if you would like.

What happens if you ask the chatbot about something you or it had said earlier? Try telling it your name then ask it to repeat it later and see what happens.

Merging your code: After both students finish v1, student 1 should create a pull request from s1basic to s2basic using GitHub's web UI. Student 2 should review the pull request. The pull request will likely contain many conflicts, since both students implemented the same function.

Feel free to use GitHub’s UI to comment on the pull request and discuss each other’s code. Decide which version you like the best together (this might be subjective). Student 2 should then perform the merge into s2basic, resolving any conflicts in accordance with your decision. You will be graded on your comments during code review.

Feel free to use either the terminal, VSCode Git plugins or other Git UIs, or the GitHub web UI to do this as you see fit. You can ask AI for help with this process.

V2

All students should work on this part together on the s2basic branch after the pull request has been merged.

When testing V1, you would have noticed that the chatbot has no memory! It does not remember anything you said before. There is a good reason for this: whenever you send a new message, the stencil code calls chat_with_user. Each of these calls creates a new chat_session, a blank slate! It then adds the new message to that session, but discards the session when it goes out of scope.

To make sure the chatbot has some memory, you will need to retain the chat session (notably, the message history inside it!) between calls to chat_with_user. You do not want to lose the chat_session from previous calls, instead, you want to reuse it.
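The difference can be seen with a toy, purely illustrative Session type (hypothetical; the real type is kalosm's Chat<Llama>): a session created inside the method is dropped at the end of every call, while one stored in a struct field lives as long as the struct does.

```rust
// Hypothetical toy session that only records messages.
#[derive(Default)]
struct Session {
    history: Vec<String>,
}

impl Session {
    // Returns how many messages this session has seen so far.
    fn add_message(&mut self, message: String) -> usize {
        self.history.push(message);
        self.history.len()
    }
}

// Like v1: a fresh session per call, dropped when the call returns.
struct Forgetful;

impl Forgetful {
    fn chat(&self, message: String) -> usize {
        let mut session = Session::default();
        session.add_message(message)
    } // `session` goes out of scope here, and its history is gone
}

// Keeps the session in a field, so it persists across calls.
struct Remembering {
    session: Session,
}

impl Remembering {
    fn chat(&mut self, message: String) -> usize {
        self.session.add_message(message)
    }
}

fn main() {
    let forgetful = Forgetful;
    let mut remembering = Remembering { session: Session::default() };
    // forgetful always reports 1; remembering reports 1, 2, 3
    for msg in ["hi", "my name is Sophie", "what is my name?"] {
        println!(
            "forgetful saw {}, remembering saw {}",
            forgetful.chat(msg.to_string()),
            remembering.chat(msg.to_string())
        );
    }
}
```

Note that mutating stored state requires &mut self (or some form of interior mutability), so check which receiver the stencil's chat_with_user uses before choosing what to store.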

Navigate to src/solution/v2.rs in VSCode. You will notice that this stencil is a lot emptier than the previous one: this is by design. It gives you the freedom to implement the chatbot any way you like and, importantly, the freedom to store whatever you want inside the ChatbotV2 struct.

All students should discuss their ideas for this part together, and decide what type to store inside ChatbotV2. Then, they should find a way to initialize that data correctly inside the new function. Finally, they can copy in the implementation of chat_with_user from v1, and adapt it to the new struct in v2.

Testing your solution: use this command:

```shell
cargo run --release --features v2
```

You should test to ensure that your chatbot remembers things you had said previously.

Additionally, do the following test. Run the chatbot, open your browser and navigate to the chatbot page, then log in using your name and tell the chatbot something about yourself: your name, favorite color, etc.

Then, in a new tab, navigate to the chatbot page again, and log in using a different username, for example, Sophie. Do not tell the chatbot anything; instead, just ask it for your name or favorite color. See what happens! Is this good behavior? Can you think of any problems that may arise from it in practice?

V3

As your testing in v2 should have shown, your chatbot now has memory, but it cannot distinguish between the histories of different users; instead, they are all mashed together. In v3, your task is to separate, or isolate, this memory by user.

In VSCode, navigate to src/solution/v3.rs. You will notice that this stencil code is also nearly empty, giving you the freedom to store and manage whatever data and state you want within the ChatbotV3 struct. You will also notice that chat_with_user here takes one extra argument: username.

All students should discuss together what kind of data they want to store in the struct, and modify the struct definition and the new function together accordingly. One of you should push this code to s2basic, and then student 1 should merge s2basic into s1basic. Confirm that you can see all of the commits from v2 and v3 (so far) on that branch after merging.

Hint: do you think you can achieve the desired functionality while storing only one chat session? Do you need more sessions? How many do you need? Hint: should there be some linking between the username and the corresponding chat session?

After completing the struct definition and new function, student 1 and student 2 should complete chat_with_user and get_history independently on s1basic and s2basic.

Student 1: you need to retrieve the correct chat session. After that, you should add the message to it and retrieve the response similarly to v1 and v2.

Hint: what if this is the very first message a user sends? Hint: what if this is the second (or later) message a user sends?
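A standard Rust tool for "look something up by key, creating it if it is missing" is HashMap's entry API. The sketch below applies it to a hypothetical per-user message log (a Vec<String>) rather than a real chat session; whether and how you use this pattern in v3 is up to your team.

```rust
use std::collections::HashMap;

// Appends `message` to `user`'s log, creating an empty log on first use,
// and returns how many messages that user has sent so far.
fn record(logs: &mut HashMap<String, Vec<String>>, user: &str, message: &str) -> usize {
    let log = logs.entry(user.to_string()).or_insert_with(Vec::new);
    log.push(message.to_string());
    log.len()
}

fn main() {
    let mut logs: HashMap<String, Vec<String>> = HashMap::new();
    record(&mut logs, "sophie", "my favorite color is green");
    record(&mut logs, "alex", "hello");
    let n = record(&mut logs, "sophie", "what is my favorite color?");
    // prints "sophie has sent 2 messages; alex has sent 1"
    println!("sophie has sent {n} messages; alex has sent {}", logs["alex"].len());
}
```

entry(...).or_insert_with(...) covers both the first-message case (the key is missing) and later messages (the key exists). If creating the value needs async work, the closure passed to or_insert_with cannot .await, so an explicit check with contains_key or get_mut may be needed instead.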

You can test your code using the same workflow as v2: log in as two users from two different tabs and see if the chatbot leaks information about one user to the other. Note: your chatbot should still remember information sent earlier by the same user (in the same tab).

Student 2: you need to retrieve the chat session as well. Then, rather than adding a message, you need to retrieve and return the history of the user’s conversation so far as a vector of strings Vec<String>.

We suggest you look at the session function from kalosm and its history function. Look at the examples provided by kalosm in the two links above for inspiration. Consider printing the history using println!("{:?}", history);, replacing history with the name of your variable.
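Turning a history into a Vec<String> is typically an iterator map followed by collect. The sketch below uses a hypothetical Message type with role and content fields; kalosm's real history item type and its accessor methods may differ, so check its documentation for how to get the text of each message.

```rust
// Hypothetical stand-in for one entry of a chat history.
#[derive(Debug)]
struct Message {
    role: String,
    content: String,
}

// Keep only the text of each message, in order.
fn history_to_strings(history: &[Message]) -> Vec<String> {
    history.iter().map(|m| m.content.clone()).collect()
}

fn main() {
    let history = vec![
        Message { role: "user".into(), content: "Ahoy!".into() },
        Message { role: "assistant".into(), content: "Arr, ahoy matey!".into() },
    ];
    // Inspect the raw history while debugging.
    println!("{:?}", history);
    for m in &history {
        println!("{}: {}", m.role, m.content);
    }
    // prints ["Ahoy!", "Arr, ahoy matey!"]
    println!("{:?}", history_to_strings(&history));
}
```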

This function's purpose is to display the history in the UI after a user logs back in (see property 3 in the description below). Thus, it cannot be completely tested without your teammate's implementation of chat_with_user.

Merging, Testing, and Submission

After both students are happy with their respective functions in v3, student 1 should create a new branch basic_submission based off s1basic. Student 2 should then merge s2basic into basic_submission.

After the merge, the basic_submission branch should contain all the code for v1, v2, and v3 including both chat_with_user and get_history.

Each student should do some manual testing by running the below command and opening several tabs to chat with the chatbot concurrently:

```shell
cargo run --release --features v3
```

The students should verify that the v3 solution meets these properties:

- The chatbot never reveals information given to it by one user to a different user, no matter how persuasive that second user is.

- The chatbot remembers earlier information given to it by the same user. If it does not, chat_with_user has some bug that needs fixing!

- After you chat with the chatbot as some specific user, say Sophie, you can refresh the page (or open a new tab) and log in as that same user again, and you will see all of your previous messaging history in the page. If this does not work, then get_history has some bug that needs fixing!

Students should feel free to apply fixes to their code and push it to basic_submission, but should coordinate with each other to avoid trying to apply the same fix at the same time.

It is also a good idea to run v1 and v2 one or two times again after you are done with all changes, just in case.

When you are done, student 1 can submit your solution via Gradescope. Make sure you add your teammate to the submission as a group submission.

Part 2 - Storing and Caching History

In the previous part, you implemented a chatbot that keeps track of the conversation history for independent conversations by independent users separately.

However, one downside of the v3 implementation is that if the Rust application is terminated (e.g., by closing the terminal you ran it from) and then restarted (by calling cargo run ...), the entire history is lost.

The reason this happens is that the history is saved inside your chatbot struct (and more specifically, inside a variable in the stencil code that contains an instance of your struct). Like all variables in any program, this data is lost when the program is closed or terminated.

To fix this, we will need to save the data to a file, so that it survives even if the program is terminated, and then read the data from that file in future executions of the program.

Git instructions: We will not tell you what to do with Git, branches, or merges. Instead, we leave it up to you to decide how to proceed. Just make sure that (1) you start by branching off your basic_submission branch from Part 1, (2) you DO NOT modify basic_submission, and (3) you end up with the complete, tested code from all students on your team on a branch called complete_chatbot.

V4 - File Chatbot

Start by implementing the missing code in file_library.rs and v4.rs, both located under file_chatbot/src/solution/. The comments in the code point you toward helpful functions provided by the kalosm library for writing a LlamaChatSession to bytes and loading it back from bytes. The comments also point you to fs::write and fs::read for writing and reading bytes to and from a file, respectively.
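A standard-library-only sketch of the file side: fs::write stores a byte slice, fs::read loads it back, and a missing file shows up as an error of kind NotFound, which is a convenient way to detect a user with no saved history yet. The helper names and the one-file-per-user naming scheme are hypothetical, and the kalosm calls that turn a session into bytes and back are deliberately not shown.

```rust
use std::fs;
use std::io::{self, ErrorKind};
use std::path::{Path, PathBuf};

// Hypothetical naming scheme: one file per user inside `dir`.
fn session_path(dir: &Path, username: &str) -> PathBuf {
    dir.join(format!("{username}.session"))
}

// Overwrites the file (creating it if needed) with the given bytes.
fn save_bytes(path: &Path, bytes: &[u8]) -> io::Result<()> {
    fs::write(path, bytes)
}

// Returns Ok(None) when there is no saved file yet, e.g. for a brand-new user.
fn load_bytes(path: &Path) -> io::Result<Option<Vec<u8>>> {
    match fs::read(path) {
        Ok(bytes) => Ok(Some(bytes)),
        Err(e) if e.kind() == ErrorKind::NotFound => Ok(None),
        Err(e) => Err(e),
    }
}

fn main() -> io::Result<()> {
    let dir = std::env::temp_dir();
    let path = session_path(&dir, "sophie");

    // In V4 these bytes would come from serializing a chat session.
    save_bytes(&path, b"serialized chat session")?;
    assert_eq!(load_bytes(&path)?, Some(b"serialized chat session".to_vec()));

    // Once the file is gone, the user looks brand new again.
    fs::remove_file(&path)?;
    assert_eq!(load_bytes(&path)?, None);
    Ok(())
}
```

A username containing characters such as / would produce an unexpected path, so a real implementation may want to restrict or sanitize names.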

Student 1 should implement chat_with_user and save_chat_session_to_file. Student 2 should implement get_history and load_chat_session_from_file.

You will be able to test your code once all students are done and you have combined your implementations. Then you can follow this testing workflow:

```shell
cd file_chatbot/
cargo run --release
# after the program starts running, open http://127.0.0.1:3000 in your browser, log in as your user of choice, and chat with the bot.
# after you are done chatting, stop the previous command using either ctrl+c or by closing the terminal
# run the command below again to start the chatbot from scratch
cargo run --release
# open http://127.0.0.1:3000 in your browser and log in as the same user: you should see your history from the previous run, and the chatbot should be able to remember information you told it earlier.
# if you log in as a different user, you should not see that history, and the chatbot should not be able to remember any facts from it
```

All students should fix any issues they encounter while trying out the chatbot together. When you are happy with the chatbot and it appears to work as expected, run the following commands:

```shell
cd experiments/
cargo run --bin experiment1 --release
```

The code of this experiment is provided to you. It chats with your implementations of V3 and V4. After some chatting, it retrieves the history (which calls get_history(...) behind the scenes) for both V3 and V4, and prints the time it took to retrieve the history in each case.

Identify which version is slower! Try to reason about why.

V5 - Cache Chatbot

The above experiment should convince you that reading and writing to a file is actually a lot slower than interacting with data stored inside a variable!

If computer memory were unlimited, one could just keep (a copy of) all the conversations in the memory of the program. However, memory is limited, and if there are thousands or millions of users, the conversations would easily fill it up.

A common alternative that programmers use is caching. The overall idea is to keep only the needed conversations in the program's memory (e.g., inside a variable or a data structure of some kind, like a HashMap or a Vec), and to read the others from files when they are needed.

One challenge is that it is difficult to predict with certainty which conversations are going to be needed in the future and which ones are not. Instead, we have to make guesses: specifically, we have to guess which conversations should be cached (kept in memory) and which should not be (not kept in memory, and instead read from files).

A popular and easy-to-understand caching strategy is least recently used (LRU). Here, if the limit on cached conversations is reached, the next time we see a new conversation that we need to cache, we need to (1) make space for it by kicking out a previous conversation (often called evicting) and (2) put the new conversation in its place.

So, which conversation should we kick out? The LRU strategy is to kick out the least recently used conversation. You can see an example of this in action at this link. Feel free to skip over the parts titled Thoughts about Implementation Using Arrays, Hashing and/or Heap, Efficient Solution - Using Doubly Linked List and Hashing, and Time Complexity.
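To make the policy concrete, here is a small simulation that tracks only the order in which keys were used. It is neither the provided fast_cache.rs implementation nor the HashMap + Vec design you will build later; the function name and the access sequence are made up for illustration. A VecDeque holds the keys from least recently used (front) to most recently used (back).

```rust
use std::collections::VecDeque;

// Simulates LRU over a sequence of accesses and returns the evicted keys in order.
fn lru_evictions<'a>(capacity: usize, accesses: &[&'a str]) -> Vec<&'a str> {
    assert!(capacity > 0, "capacity must be at least 1");
    let mut order: VecDeque<&'a str> = VecDeque::new(); // front = least recently used
    let mut evicted = Vec::new();
    for &key in accesses {
        if let Some(pos) = order.iter().position(|&k| k == key) {
            // Hit: take the key out so it can move to the most recently used end.
            order.remove(pos);
        } else if order.len() == capacity {
            // Miss on a full cache: evict the least recently used key.
            if let Some(old) = order.pop_front() {
                evicted.push(old);
            }
        }
        order.push_back(key); // this key is now the most recently used
    }
    evicted
}

fn main() {
    // Capacity 3, matching the limit mentioned for ChatbotV5 below.
    let accesses = ["alice", "bob", "carol", "alice", "dave", "bob"];
    println!("{:?}", lru_evictions(3, &accesses)); // prints ["bob", "carol"]
}
```

alice survives because she was used again just before dave arrived, so bob became the least recently used. Finding a key with position is a linear scan, one reason simple designs like this are slower than a purpose-built cache.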

We will implement this strategy inside cache_chatbot. You are given a fast and correct implementation of an LRU cache (you can look at it inside cache_chatbot/src/solution/fast_cache.rs). You are also given the basic structure for the chatbot in cache_chatbot/src/solutions/v5.rs.

Crucially, note that the ChatbotV5 struct contains the Llama model (which you can use to create Chat objects, as in previous parts) and a cache where you can store conversations. Your task is to implement chat_with_user and get_history.

Specifically, both of these implementations first try to find the relevant conversation in the cache – this code is already given. There are two cases:

- The conversation is found in the cache, which returns the underlying Chat object. You can use that Chat object directly to retrieve the history or add a new message.

- The conversation is not in the cache. In this case, you will need to create a new Chat, and read its content from a file (if one exists) or initialize it as an empty Chat if none exists.

Either way, this conversation now becomes the most recently used one, and you must therefore add it to the cache, including any new messages sent to or received from it.
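The hit/miss flow can be sketched as a read-through lookup with write-through saving. Everything here is hypothetical: History stands in for a chat session, FakeDisk stands in for the files and counts how often they are read, and a plain HashMap stands in for the provided LRU cache.

```rust
use std::collections::HashMap;

// Hypothetical stand-in for a chat session: just a list of messages.
type History = Vec<String>;

// Hypothetical stand-in for the files on disk, with a counter of reads.
struct FakeDisk {
    files: HashMap<String, History>,
    reads: usize,
}

impl FakeDisk {
    fn read(&mut self, user: &str) -> Option<History> {
        self.reads += 1;
        self.files.get(user).cloned()
    }
    fn write(&mut self, user: &str, history: &History) {
        self.files.insert(user.to_string(), history.clone());
    }
}

// Read-through lookup: use the cached copy if present; otherwise load it
// from "disk" (or start empty if no file exists) and put it in the cache.
fn get_or_load<'c>(
    cache: &'c mut HashMap<String, History>,
    disk: &mut FakeDisk,
    user: &str,
) -> &'c mut History {
    if !cache.contains_key(user) {
        let history = disk.read(user).unwrap_or_default();
        cache.insert(user.to_string(), history);
    }
    cache.get_mut(user).expect("entry was just inserted")
}

fn main() {
    let mut cache: HashMap<String, History> = HashMap::new();
    let mut disk = FakeDisk { files: HashMap::new(), reads: 0 };
    for msg in ["hi", "my name is Sophie"] {
        let history = get_or_load(&mut cache, &mut disk, "sophie");
        history.push(msg.to_string());
        // Write-through: keep the "file" current so a later eviction loses nothing.
        disk.write("sophie", history);
    }
    println!("disk reads: {}", disk.reads); // prints "disk reads: 1": the second lookup was a hit
}
```

This sketch never evicts; the cache in V5 also removes the least recently used conversation once it holds its limit, which is exactly why the write-through step matters.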

Student 1 should implement get_history and student 2 should implement chat_with_user.

Testing your code: ChatbotV5 is configured to keep no more than 3 conversations in the cache. You should test your code to ensure the following behavior is true:

- Open 3 tabs and log in as 3 different users, after the first login, they must all get inserted into the cache, and continuing to use them should not require reading from files after the first read.

- Open a 4th tab and log in as a new user and begin chatting. The least recently used of the three earlier conversations should get removed from the cache, and the new conversation should replace it.

- If you log in to or interact with a conversation that was removed from the cache, your code will read it from its corresponding file, and then put it back in the cache.

Hint: feel free to use print statements in your code to identify which cases (e.g., chat found or not found in the cache) are being executed.

Hint: make sure you always write the new conversation session to the corresponding file, so that if the conversation ever gets evicted from the cache in the future, it will be stored / backed up in the file.

Finally, run the following command from the experiments/ folder to see how much time caching saves compared to V4:

```shell
cargo run --bin experiment2 --release
```

Building your own Cache

We gave you an implementation of LRU cache using a Rust library. In this part, you are asked to implement your own LRU cache using a HashMap and a vector. This implementation will be slower than the cache given to you, but it is a good learning experience!

You should add your implementation to cache_chatbot/src/slow_cache.rs. The file also includes instructions on how to implement it. Read the file carefully and make sure you understand it before you start.

Student 1 should implement remove_least_recently_used, and student 2 should implement mark_as_most_recently_used.

Testing your solution: After you are both done with your implementations, all students should first run these commands:

```shell
cd cache_chatbot
cargo run --bin slow_cache
```

This will run the main file inside cache_chatbot/src/slow_cache.rs, which contains some basic tests. If it produces errors, use the printed messages to help you debug your implementation.

Then, all students should run our provided tests (against which you will be graded) using:

```shell
cd cache_chatbot
cargo test
```

If all tests pass, your final step is to run our cache experiment:

```shell
cd experiments
cargo run --bin experiment3 --release
```

This experiment compares the performance of the given LRU cache and your HashMap+Vec LRU cache. Which one is faster?

Submission Instructions

After you are done, make sure all your code is on a branch called complete_chatbot.

Please make sure that v4 and v5 behave as expected, and that you are able to run experiments 1, 2, and 3. Finally, make sure that the slow_cache tests run and pass.

Then, student 1 should submit the GitHub repo and branch to Gradescope. Remember to add all the students in your group as teammates.